Washington Post staff decided to test the listening accuracy of the first two virtual personal assistants to reach the market, Google Assistant and Amazon Alexa, to find out whether they differ and, if so, how.

The testers wanted to find out how interaction with these two assistants plays out in real life, so they used the Google Home and Amazon Echo smart speakers.

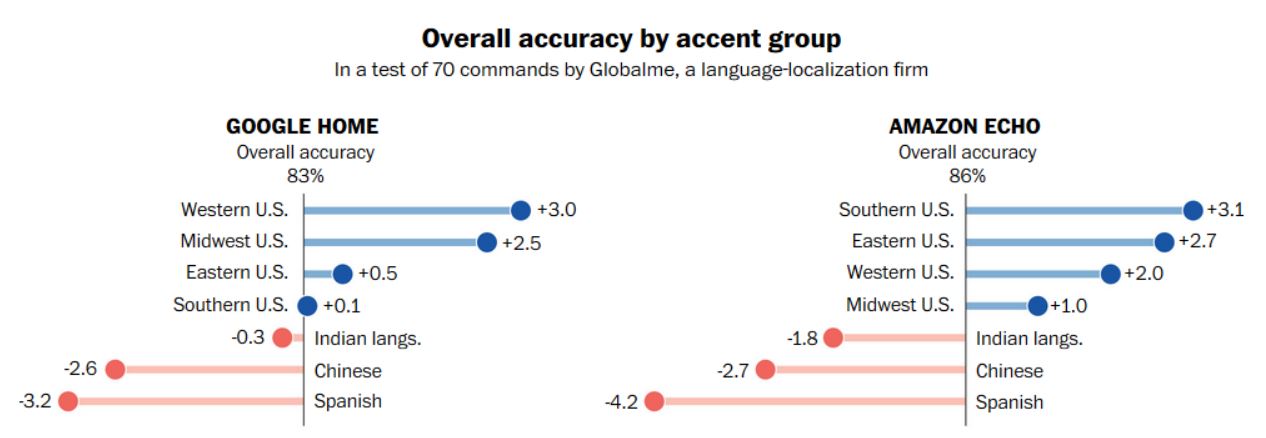

Google Assistant and Amazon Alexa have difficulty understanding some accents.

The test consisted of 70 commands (such as “Start playing Queen”, “Add a new appointment” and “How close are you to the nearest Walmart?”) given to the two digital assistants, with the aim of establishing how much a user's accent affects each device's ability to understand what is said.

Google Assistant had an overall accuracy rate of 83%, while Alexa's was slightly better at 86%. Alexa did a bit better than Google Assistant with American accents, but a little worse with Indian, Chinese and Spanish accents.